Agentic AI in 2026: Enterprise Workflows, Skills Compression, and Cyber Risk

The enterprise AI conversation has moved past chat interfaces. In 2026 the real question is whether organizations can operationalize agents that plan, use tools, execute bounded multi-step work, and still remain governable. CIO reporting across engineering, hiring, and security shows the answer is yes in narrow lanes, but only when companies redesign workflows, retrain teams, and harden controls at the same time [1][2][3][4][5].

What Adoption Looks Like Right Now

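- Agents are moving beyond one-off demos: 26% of organizations already have 11 or more agent projects underway [5]

- AI skills are spreading beyond specialist teams: job postings requiring AI literacy are growing more than 70% year over year [2]

- Gartner’s near-term disruption estimate: 32 million jobs per year significantly transformed by AI [3]

- Governance is now on the critical path: cybersecurity resilience and data privacy lead CIO’s 2026 priorities [4]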

From Copilot to Workflow Engine

The most useful shift in the 2026 discussion is definitional. Agentic AI is no longer being presented as a better autocomplete system. The stronger enterprise framing is that agents can own the first pass on bounded workflows: gathering context, invoking tools, producing an initial draft, routing work to the next system, and surfacing the need for human review. CIO’s reporting on engineering teams captures the change directly: agentic AI is moving into the first-draft stage of the software development life cycle, with humans expected to steer, review, and make the higher-order calls [1].

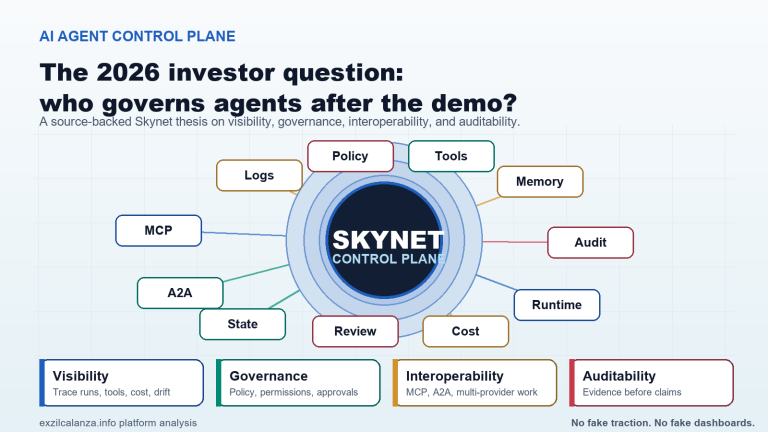

That distinction matters because it changes where value can actually show up. A coding copilot helps an individual contributor. A bounded agent can reduce the coordination burden across planning, implementation, testing, and documentation. That is why the conversation is increasingly about orchestration rather than model quality alone. If the orchestration layer cannot route tasks, control permissions, preserve context, and expose error states in a way humans can supervise, then the company does not really have an execution layer. It has a demo [1].
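To make the four orchestration duties concrete, here is a minimal sketch, assuming a single-process runner and plain Python callables as tools. None of it comes from the CIO reporting: `BoundedRun`, `StepResult`, and the example tools are hypothetical names chosen for illustration. It shows an allowlist for permissions, a shared context dictionary, and a trail that exposes denied or failed steps to a human supervisor.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Any, Callable


class StepStatus(Enum):
    OK = "ok"
    DENIED = "denied"   # tool is outside the agent's allowlist
    FAILED = "failed"   # tool raised an error


@dataclass
class StepResult:
    tool: str
    status: StepStatus
    output: Any = None
    error: str = ""


@dataclass
class BoundedRun:
    """Hypothetical run record: permissions, shared context, audit trail."""
    allowed_tools: set[str]
    context: dict[str, Any] = field(default_factory=dict)
    trail: list[StepResult] = field(default_factory=list)

    def call(self, name: str, fn: Callable[..., Any], **kwargs: Any) -> StepResult:
        # Permission control: refuse anything outside the allowlist.
        if name not in self.allowed_tools:
            result = StepResult(name, StepStatus.DENIED, error="tool not permitted")
        else:
            try:
                output = fn(self.context, **kwargs)
                # Context preservation: later steps can read earlier outputs.
                self.context[name] = output
                result = StepResult(name, StepStatus.OK, output=output)
            except Exception as exc:
                # Error exposure: record the failure instead of hiding it.
                result = StepResult(name, StepStatus.FAILED, error=str(exc))
        self.trail.append(result)
        return result

    def needs_review(self) -> list[StepResult]:
        # Every non-OK step is surfaced to the supervising human.
        return [r for r in self.trail if r.status is not StepStatus.OK]


# Illustrative tools (assumptions, not real integrations).
def fetch_ticket(context, ticket_id):
    return {"id": ticket_id, "title": "Fix login timeout"}


def draft_patch(context):
    return "Draft patch for: " + context["fetch_ticket"]["title"]


def deploy(context):
    return "deployed"


run = BoundedRun(allowed_tools={"fetch_ticket", "draft_patch"})
run.call("fetch_ticket", fetch_ticket, ticket_id=42)
run.call("draft_patch", draft_patch)
run.call("deploy", deploy)  # not in the allowlist, so it is denied

for step in run.needs_review():
    print(step.tool, step.status.value, step.error)
```

In this sketch the agent can gather context and produce a first draft, but the `deploy` call is refused and lands in the review list. That mirrors the split the paragraph describes: the agent owns the first pass, and a human owns the release decision.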

This is also why the most sober enterprise forecasts are not describing a lights-out future. Even the more optimistic CIO commentary still frames the likely end state as hybrid: agents create speed and scale, while humans remain responsible for judgment, accountability, and final ownership of outcomes. That is a much more realistic operating assumption than the “AI employee” narrative that dominated vendor marketing a year ago [1][5].

Where Agents Are Landing First

| Use Case | Why It Fits | Human Role |
|---|---|---|
| SDLC first drafts | Structured phases, clear handoffs, measurable outputs [1] | Review architecture, approve changes, own release quality |
| Repeatable cross-system workflows | High manual effort and large coordination overhead [5] | Define bounds, permissions, and escalation paths |
| Research and data gathering | Fragmented context can be assembled faster by tool-using agents [5] | Validate findings and make final decisions |
| High-risk regulatory decisions | Poor fit for full autonomy because accountability remains human [5] | Stay human-led, with AI support only |

Deployment Has Started, but Scale Is Still Uneven

One of the clearest signs that the market has matured is that deployment numbers now describe a layered curve instead of a single hype cycle. CIO reports that 26% of organizations already have 11 or more agent projects underway, though roughly half remain in proof-of-concept or pilot phases. That means companies are no longer merely testing whether agents exist; they are testing where they belong, what controls they need, and which domains deserve scale first [5].

The practical lesson from CIO interviews is that not every task deserves an agent. Teams are getting better at identifying a usable “sweet spot”: work that is repeatable, bounded, cross-system, and valuable enough to justify orchestration, yet not so sensitive that final authority cannot stay with a human. In other words, the deployment bottleneck is not raw capability. It is workflow design [5].
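The "sweet spot" criteria can be read as a simple screening checklist. The sketch below is one possible encoding, assuming each criterion is a yes/no judgment made by the team. The `TaskProfile` fields and the three candidate tasks are hypothetical illustrations, not examples taken from the CIO interviews.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskProfile:
    name: str
    repeatable: bool
    bounded: bool
    cross_system: bool
    worth_orchestrating: bool
    human_keeps_final_say: bool


def agent_fit(task: TaskProfile) -> tuple[bool, list[str]]:
    """Return (fits, unmet criteria) for a candidate task."""
    checks = {
        "repeatable": task.repeatable,
        "bounded": task.bounded,
        "cross-system": task.cross_system,
        "worth orchestrating": task.worth_orchestrating,
        "human keeps final say": task.human_keeps_final_say,
    }
    unmet = [label for label, ok in checks.items() if not ok]
    return (not unmet, unmet)


# Hypothetical candidates for illustration only.
candidates = [
    TaskProfile("release-notes draft", True, True, True, True, True),
    TaskProfile("regulatory approval decision", True, True, True, True, False),
    TaskProfile("one-off executive memo", False, True, False, False, True),
]

for task in candidates:
    fits, unmet = agent_fit(task)
    print(task.name, "->", "candidate" if fits else "skip: " + ", ".join(unmet))
```

A checklist like this requires every criterion to hold, which matches the framing that a task must be repeatable, bounded, cross-system, and valuable while still leaving final authority with a human. A weighted score would be an alternative design, but it could let a high-value task slip through despite failing the human-authority test.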

“The role is evolving from system owner to workforce orchestrator.”

Hrishikesh Pippadipally, via CIO [5]

That shift in the CIO role is more than rhetoric. Once AI agents are embedded in live operational flows, the CIO becomes responsible not just for technology choices but for how human and digital workers interlock. Permissions, auditability, error handling, adoption, and supervision become management problems, not just engineering ones. This is why agent rollouts that ignore organizational design tend to stall after the demo phase [5].

What the 2026 Skills Data Is Saying

| Signal | Current Reading | Interpretation |
|---|---|---|
| AI-literacy job postings | Growing more than 70% year over year | AI fluency is becoming a mainstream hiring filter [2] |
| Permanent hiring plans | 61% plan to increase permanent headcount in 1H26 | Firms are still hiring, but for a reshaped skill mix [2] |
| Top explicit skills gap | AI and machine learning cited by 45% of IT leaders | Demand is outrunning readiness [2] |
| Job disruption estimate | 32 million jobs per year significantly transformed | Near-term change is redesign, not just layoffs [3] |

The Skills Gap Is Not Theoretical Anymore

The hiring data is one of the strongest indicators that the enterprise AI build-out has moved into an operating phase. CIO reports that job postings requiring AI literacy are growing by more than 70% year over year, while roles with above-average demand include AI and machine learning engineers, cybersecurity engineers, data analysts, DevOps engineers, software engineers, and governance-related positions. The hiring picture is not one of labor collapse; it is one of labor repricing around AI fluency, data capability, and control functions [2].

That matches the wider workforce analysis. Gartner’s estimate, cited by CIO, is that 32 million jobs per year will be significantly transformed by AI in the near term. The same reporting says less than 1% of the 1.4 million layoffs tracked in 2025 were directly caused by AI productivity gains. The immediate pattern is different: hiring avoidance at the low end, role consolidation in workflow-heavy jobs, and more demand for people who can supervise, integrate, secure, and govern automated work [3].

That distinction is important because it changes how companies should respond. If the near-term story is primarily redesign rather than pure replacement, then the winning response is not just cost cutting. It is building an internal capability stack: structured training, role-based learning, cross-functional operating models, and a clear map of which tasks become automated, augmented, or protected from automation. The CIO article on 2026 priorities is direct on this point: organizations cannot hire their way out of the gap; they have to build talent internally [2][4].

Cybersecurity, Privacy, and Governance Are Now the Real Bottlenecks

The fastest way to misunderstand 2026 enterprise AI is to think the next bottleneck is model quality. The more credible reporting says the bottleneck is governance. CIO’s 2026 priorities package puts cybersecurity resilience and data privacy at the top of the list because enterprises are now integrating generative and agentic AI into critical workflows and customer transactions. As TransUnion’s Yogesh Joshi puts it, organizations should expect bad actors to use the same AI technologies to disrupt those workflows and compromise sensitive data [4].

That observation is fully consistent with the operational lessons coming out of early agent deployments. CIO interviews say organizations would bring legal and security teams in earlier, define agent boundaries more clearly, and treat agents more like junior team members than autonomous replacements. Those are not abstract ethics guidelines. They are control requirements. Without explicit ownership, monitoring, and escalation rules, an agent program quickly turns into a hidden operational liability [4][5].
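One way to treat these as control requirements rather than guidelines is to check them before an agent goes live. The following is a minimal sketch under that assumption. The `AgentRegistration` record, its field names, and the `invoice-matcher` example are hypothetical and do not describe any organization cited here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentRegistration:
    name: str
    owner: str                         # accountable human or team
    allowed_actions: tuple[str, ...]   # explicit agent boundaries
    monitoring_enabled: bool
    escalation_contact: str
    security_reviewed: bool
    legal_reviewed: bool


def control_gaps(reg: AgentRegistration) -> list[str]:
    """List the control requirements this registration does not yet meet."""
    gaps = []
    if not reg.owner.strip():
        gaps.append("no named owner")
    if not reg.allowed_actions:
        gaps.append("no defined boundaries")
    if not reg.monitoring_enabled:
        gaps.append("monitoring disabled")
    if not reg.escalation_contact.strip():
        gaps.append("no escalation path")
    if not reg.security_reviewed:
        gaps.append("security review missing")
    if not reg.legal_reviewed:
        gaps.append("legal review missing")
    return gaps


# Hypothetical pilot agent used only to exercise the check.
pilot = AgentRegistration(
    name="invoice-matcher",
    owner="finance-ops",
    allowed_actions=("read_invoice", "propose_match"),
    monitoring_enabled=True,
    escalation_contact="",
    security_reviewed=True,
    legal_reviewed=False,
)

print(control_gaps(pilot))
```

An empty list would mean the agent clears this particular gate. Here the pilot is held back because it has no escalation path and has not had legal review, the two gaps the interviews suggest teams tend to discover late.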

Another governance issue is architectural rather than behavioral. CIO reporting on 2026 priorities emphasizes API-first, modular, loosely coupled foundations and better data quality, lineage, and accessibility. That is what allows agents to remain composable and observable instead of becoming a tangle of opaque prompts inside legacy stacks. In practice, the governance question is now inseparable from the infrastructure question [4].

What a Workable 2026 Rollout Actually Looks Like

A workable rollout pattern is starting to emerge. First, organizations choose high-volume tasks with clear inputs, outputs, and success criteria. Second, they maintain human control over final decisions and high-risk exceptions. Third, they measure value using cycle-time reduction, throughput, accuracy, error rates, and capacity unlocked for higher-value work, not just simplistic labor-savings math. Fourth, they build governance up front rather than after the first incident [1][4][5].
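The value measures in the third step reduce to straightforward arithmetic once a baseline and a pilot period are recorded. The sketch below assumes both periods have the same length and that each completed item has a known start-to-finish time. The `PeriodStats` fields and every number are hypothetical, and accuracy is left out because its definition depends on the workflow.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PeriodStats:
    items_completed: int
    items_with_errors: int
    total_cycle_hours: float   # summed start-to-finish time across items
    staff_hours_spent: float   # human effort spent on the workflow


def avg_cycle_hours(stats: PeriodStats) -> float:
    return stats.total_cycle_hours / stats.items_completed


def error_rate(stats: PeriodStats) -> float:
    return stats.items_with_errors / stats.items_completed


def compare(baseline: PeriodStats, pilot: PeriodStats) -> dict[str, float]:
    """Compare two equal-length periods; positive reductions are improvements."""
    return {
        "cycle_time_reduction": 1 - avg_cycle_hours(pilot) / avg_cycle_hours(baseline),
        "throughput_change": pilot.items_completed / baseline.items_completed - 1,
        "error_rate_change": error_rate(pilot) - error_rate(baseline),
        "staff_hours_freed": baseline.staff_hours_spent - pilot.staff_hours_spent,
    }


# Hypothetical figures for illustration only.
baseline = PeriodStats(items_completed=200, items_with_errors=10,
                       total_cycle_hours=1600.0, staff_hours_spent=400.0)
pilot = PeriodStats(items_completed=250, items_with_errors=10,
                    total_cycle_hours=1500.0, staff_hours_spent=300.0)

for metric, value in compare(baseline, pilot).items():
    print(f"{metric}: {value:+.2f}")
```

With these made-up inputs, average cycle time falls from 8 hours to 6 hours (a 25% reduction), throughput rises 25%, the error rate drops by one percentage point, and 100 staff hours are freed. Reporting the four figures side by side is the point: a labor-savings number alone would hide whether speed was bought at the cost of more errors.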

This is less dramatic than the “AI worker replaces teams” narrative, but it is more durable. Enterprise adoption is happening. The evidence is visible in hiring patterns, workflow redesign, and the growing number of active agent programs. But the organizations most likely to realize value are the ones that treat agentic AI as an operating model change, not merely as a software procurement category [1][2][5].

Key Takeaways

- Agentic AI is moving into bounded workflow execution, especially SDLC first drafts and repeatable cross-system processes [1][5].

- Adoption is real but uneven: 26% of organizations already have 11 or more agent projects underway, yet roughly half remain in proof-of-concept or pilot phases [5].

- The labor impact is best understood as redesign and repricing, not just layoffs: job postings requiring AI literacy are growing more than 70% year over year, and Gartner sees 32 million jobs per year significantly transformed [2][3].

- Cybersecurity resilience, privacy, and governance now sit on the critical path for enterprise AI value creation [4].

References

- [1] CIO, “How agentic AI will reshape engineering workflows in 2026,” Feb. 20, 2026. [Online]. Available: https://www.cio.com/article/4134741/how-agentic-ai-will-reshape-engineering-workflows-in-2026.html

- [2] CIO, “State of IT jobs: AI sparks rapidly changing market for skills,” Mar. 2026. [Online]. Available: https://www.cio.com/article/4134254/state-of-it-jobs-ai-sparks-rapidly-changing-market-for-skills.html

- [3] CIO, “AI’s workforce impact has only just begun,” Mar. 10, 2026. [Online]. Available: https://www.cio.com/article/4142699/ais-workforce-impact-has-only-just-begun.html

- [4] CIO, “10 top priorities for CIOs in 2026,” Jan. 2026. [Online]. Available: https://www.cio.com/article/4117023/10-top-priorities-for-cios-in-2026.html

- [5] CIO, “How the growing AI workforce is changing the CIO role,” Feb. 11, 2026. [Online]. Available: https://www.cio.com/article/4126383/how-the-growing-ai-workforce-is-changing-the-cio-role.html